There is a version of this story in which artificial intelligence predicting Supreme Court decisions is a triumph of data science. Feed the model enough decisions, enough oral argument transcripts, enough voting records, and it learns the pattern. It gets the answer right. The technology works.

Justice Sonia Sotomayor, speaking to students at the University of Alabama School of Law, had a different read. AI models that successfully anticipate how the high court will rule are, she told them, "a very bad thing." "It shows we're way too predictable," she said. The remark was framed as a concern about judicial independence — the worry that a court whose outcomes can be forecast like weather is a court that has stopped actually deciding cases.

That framing is correct. But it stops too early. The deeper problem is not that the court is predictable. The problem is why it is predictable — and what that tells us about the institution's claim to be doing law at all.

When a machine learning model predicts how a justice will vote, it is not reading the law. It is reading the justice. It is tracking ideological consistency across thousands of decisions, identifying which way a given jurist breaks when a certain set of facts meets a certain set of legal questions. The model does not care about the Constitution. It cares about the pattern. And if the pattern is reliable enough to forecast, reliable enough that researchers have built models with accuracy rates well above chance, then the pattern is the decision. The legal reasoning is downstream of the outcome, not upstream of it.

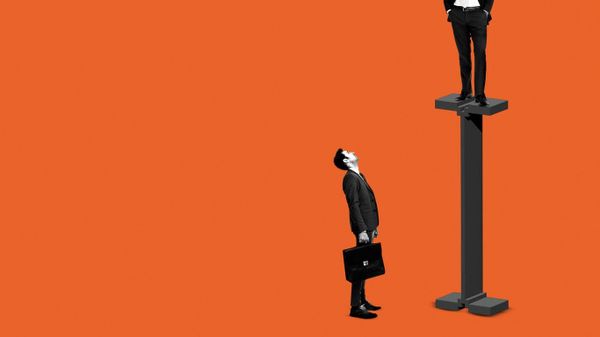

This is the thesis Sotomayor gestured toward without quite stating: a court that algorithms can predict is a court that is not interpreting law. It is expressing ideology. The two are not the same thing, and the entire legitimacy of the federal judiciary rests on the distinction.

The accountability question here is structural. Federal judges are appointed for life. They are not elected. They are not subject to recall. The constitutional justification for this extraordinary insulation from democratic pressure is that judges apply law — that they are constrained by text, precedent, and legal principle in ways that make their personal politics irrelevant. If that claim were true, you would not be able to build a model that predicts their votes by tracking their ideology. The model's success is evidence that the claim is, at minimum, substantially false.

This is not a new observation in legal academia. The legal realist movement, dating to the early twentieth century, argued that judges' decisions are shaped by their social backgrounds, values, and political commitments as much as by formal legal doctrine. What is new is that machine learning has turned that theoretical argument into a measurable, falsifiable, and increasingly confirmed empirical finding. The algorithm is not making a claim about judicial philosophy. It is running a regression. And the regression keeps coming back with the same answer: you can predict the vote from the judge.

The systemic pattern here extends well beyond any individual justice. The Supreme Court's current majority was assembled with extraordinary deliberateness by the Federalist Society and a network of conservative legal organizations that spent decades identifying, cultivating, and positioning ideologically reliable candidates for the federal bench. That project — documented in exhaustive detail and never seriously disputed — was premised on exactly the kind of predictability that Sotomayor now laments. The goal was not to install brilliant legal minds who might surprise you. The goal was to install jurists whose outcomes on abortion, guns, administrative power, and voting rights could be anticipated in advance. The AI models are just confirming that the project succeeded. As Tinsel News has covered in the context of voting rights cases before the current court, the pattern of outcomes on democracy-adjacent questions has been strikingly consistent with a specific ideological program — not with a neutral reading of constitutional text.

There is a counter-argument worth taking seriously: that all judges have values, that perfect value-neutrality is impossible and probably not even desirable, and that what matters is whether the legal reasoning is rigorous and transparent, not whether the outcome could have been predicted. This is a real position, held by serious people. But it does not survive contact with how well the prediction models perform. If values merely influenced the margins of close cases, the models would do modestly better than chance. They do not do modestly better than chance. They perform well enough to be commercially useful, well enough that legal practitioners are beginning to incorporate predictive analytics into litigation strategy. That level of predictability does not describe a court that is occasionally nudged by values. It describes a court that is, in a meaningful sense, running on them.

The power and money dimension of this story is worth naming directly. Predictive judicial analytics is now a product. Legal technology firms sell access to models that forecast not just Supreme Court outcomes but circuit court decisions, specific judges' rulings on motions, and likely damages in tort cases. The clients are law firms, corporations, and financial institutions: entities with the resources to pay for predictive advantage in litigation. A hedge fund can buy a model that tells it how a regulatory challenge to a financial rule is likely to come out. A pharmaceutical company can assess its odds before deciding whether to fight an FDA enforcement action. The asymmetry is not incidental. When judicial outcomes are predictable and that predictability is commodified, the parties with resources to purchase the forecast gain a structural advantage over those without. The courthouse remains formally open to everyone. The edge belongs to the well-capitalized.

This connects to a broader pattern in how the legal system processes power. The Supreme Court's legitimacy depends on a public belief that its decisions emerge from law rather than politics — that the nine justices are doing something categorically different from what elected officials do. That belief has been eroding for years, through confirmation battles that are openly ideological, through decisions that track partisan outcomes with suspicious consistency, through the collapse of any pretense that the confirmation process is about legal qualifications rather than political reliability. The AI prediction story is not the cause of that erosion. It is a precise, quantified measure of how far it has gone. As Tinsel News has noted in coverage of the constitutional questions now before the court, the stakes of who controls that institution — and how predictably they vote — have rarely been higher.

Sotomayor's discomfort is understandable, and her instinct is correct. But there is something worth examining in the framing of her concern. She said the predictability shows the court is "not stepping"; the full quote was cut off in the source material, but the implication is that the justices are not stepping outside expected patterns, not surprising anyone, not demonstrating the kind of independent legal judgment that would make prediction harder. That is a diagnosis of the problem. It is not an account of how the problem was created, who created it, or what the consequences are for the millions of people whose rights and lives are shaped by a court whose outcomes were, in important respects, determined before the cases were filed.

The algorithm did not make the Supreme Court ideologically predictable. It just made that predictability legible: precise enough to measure, reliable enough to sell, and undeniable enough that even a sitting justice has to acknowledge it out loud. What happens next depends on whether the institution treats that acknowledgment as a warning or a weather report. The 9-8 Fifth Circuit ruling on the Ten Commandments in Texas classrooms is one recent illustration of how thin the margin between law and ideology has become, and of how much the composition of a court determines the outcome before arguments begin.